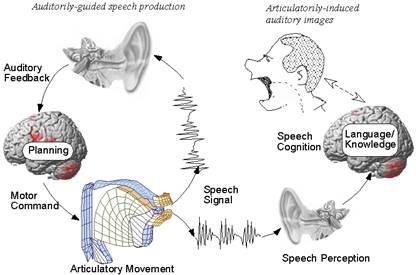

Hemodynamic studies have shown that the auditory cortex can be activated by visual lip movements and is a site of interactions between auditory and visual speech processing. However, they provide no information about the chronology and mechanisms of these cross-modal processes. We recorded intracranial event-related potentials to auditory, visual, and bimodal speech syllables from depth electrodes implanted in the temporal lobe of 10 epileptic patients (altogether 932 contacts). We found that lip movements activate secondary auditory areas, very shortly (≈10 ms) after the activation of the visual motion area MT/V5. After this putatively feedforward visual activation of the auditory cortex, audiovisual interactions took place in the secondary auditory cortex, from 30 ms after sound onset and before any activity in the polymodal areas.

Audiovisual interactions in the auditory cortex, as estimated in a linear model, consisted both of a total suppression of the visual response to lipreading and a decrease of the auditory responses to the speech sound in the bimodal condition compared with unimodal conditions. These findings demonstrate that audiovisual speech integration does not respect the classical hierarchy from sensory-specific to associative cortical areas, but rather engages multiple cross-modal mechanisms at the first stages of nonprimary auditory cortex activation.

Visual cues from lip movements can deeply influence the auditory perception of speech and, thus, the subjective experience of what is being heard (Cotton, 1935; McGurk and MacDonald, 1976). This suggests that visual information can access processing in the auditory cortex. Indeed, several hemodynamic imaging studies in humans have shown that the auditory cortex can be activated by lip reading (Paulesu et al., 2003), and is a site of interactions between auditory and visual speech cues (Skipper et al., 2007). The results have mainly been interpreted in an orthodox model of organization of the sensory systems, in which auditory and visual cues are first processed in separate unisensory cortices, and then converge in multimodal associative areas (Mesulam, 1998). Visual influences in the auditory cortex would result from feedback projections from those polysensory areas (Calvert et al., 2000; Miller and D'Esposito, 2005), particularly from the superior temporal sulcus (STS). Indeed, this structure is known to respond to both visual and auditory inputs and has repeatedly been found to be active in functional magnetic resonance imaging (fMRI) studies of audiovisual integration of speech (Miller and D'Esposito, 2005), as well as nonspeech stimuli (Beauchamp et al., 2004). However, the poor temporal resolution of hemodynamic imaging prevents access to the temporal dynamics of the cross-modal processes, and thus to the neurophysiological mechanisms by which visual information can influence auditory processing. Furthermore, the model of late multisensory convergence is being challenged by growing evidence that multisensory interactions can take place at early stages of processing, both in terms of anatomical organization and timing of activations (for review, see Bulkin and Groh, 2006; Ghazanfar and Schroeder, 2006).
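The linear model mentioned above is not spelled out in this text. A common way to estimate audiovisual interactions in event-related potentials is the additive criterion, in which the interaction is the bimodal response minus the sum of the two unimodal responses. The sketch below assumes that criterion; the function name and the amplitude values are hypothetical and serve only as illustration, not as recorded data.

```python
def additive_interaction(av, a, v):
    """Return AV - (A + V), sample by sample, for equal-length ERP traces.

    Assumption: interactions are estimated with the additive criterion.
    The source only says "a linear model" and gives no formula.
    """
    if not (len(av) == len(a) == len(v)):
        raise ValueError("all three traces must have the same length")
    return [av_t - (a_t + v_t) for av_t, a_t, v_t in zip(av, a, v)]


# Hypothetical amplitudes (microvolts) at four time points; not real data.
auditory = [0.0, 4.0, 6.0, 2.0]
visual = [1.0, 1.0, 1.0, 1.0]
bimodal = [1.0, 3.0, 4.0, 3.0]

interaction = additive_interaction(bimodal, auditory, visual)
print(interaction)
```

Under this criterion, a value of zero means the bimodal response equals the sum of the unimodal responses, and a nonzero value means it departs from that sum. In these made-up traces the bimodal response falls below the sum at the middle two time points, the kind of pattern that would be read as a decreased response in the bimodal condition.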
